Update, 11:06 AM EST: Paragraph 7 was added to address the possibility that the driver had a medical emergency. California Highway Patrol officers caught up to the vehicle and noted that the driver was awake.

A Tesla Model Y with an apparently sleeping driver was recently spotted on the I-15 Freeway near Temecula, California. The incident shows that both drivers and the media still widely misunderstand the capabilities of semi-autonomous vehicles, and often discuss them irresponsibly.

According to a report from KTLA 5, another driver followed a woman in a Tesla Model Y for more than fifteen minutes on the California interstate, trying to wake her as she relied on the automaker’s semi-autonomous driving functions.

Both the report and the incident show that there are still huge misunderstandings about the capabilities of semi-autonomous driving suites, including Tesla’s Full Self-Driving and Autopilot, which require users to remain vigilant and be prepared to take over the vehicle at any point.

Drivers often use semi-autonomous features irresponsibly, and social media has shown time and again that people abuse these capabilities even though the vehicles are not fully autonomous.

It is no secret that people and companies have used whatever they can to relieve themselves of the responsibility of paying attention while the car handles some tasks on its own. Since advanced driver assistance systems (ADAS) were introduced over the past several years, some drivers have used them as an excuse to play on their phones, read books, eat, or even catch up on sleep.

However, the risks that come with this behavior are potentially catastrophic. For one, those who use these functions irresponsibly put themselves and every other driver on the road at risk, because the driver is responsible for taking over if the vehicle needs assistance or encounters a situation it cannot handle safely. Additionally, if an accident occurs, it can be framed as the fault of Tesla or whichever manufacturer built the vehicle, and the resulting skepticism can set the future of semi-autonomous and autonomous driving back years.

It is possible that the driver had some type of medical emergency or accidentally fell asleep, in which case the Tesla’s functions kept the operator and others safe. Police stated that officers caught up to the vehicle two minutes after receiving calls about the driver, who was attentive at that time.

However, the media’s portrayal of the situation also shows that many people remain uninformed about the capabilities of Teslas. While the name of Tesla’s Full Self-Driving suite has caused controversy, the automaker continues to remind those who use it to remain vigilant, as the cars cannot truly drive themselves.

Tesla addresses the question “Do I still need to pay attention while using Autopilot?” in the FAQ section of its Autopilot and Full Self-Driving page:

“Yes. Autopilot is a hands-on driver assistance system that is intended to be used only with a fully attentive driver. It does not turn a Tesla into a self-driving car nor does it make a car autonomous.

Before enabling Autopilot, you must agree to “keep your hands on the steering wheel at all times” and to always “maintain control and responsibility for your car.” Once engaged, Autopilot will also deliver an escalating series of visual and audio warnings, reminding you to place your hands on the wheel if insufficient torque is applied. If you repeatedly ignore these warnings, you will be locked out from using Autopilot during that trip.

You can override any of Autopilot’s features at any time by steering, applying the brakes, or using the cruise control stalk to deactivate.”
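The escalation Tesla describes in this answer can be read as a small state machine. The sketch below illustrates that logic only and is not Tesla’s implementation: the class name, the three warning stages, and the lockout threshold are hypothetical values chosen for illustration, since the FAQ says only that warnings escalate and that “repeatedly” ignoring them ends Autopilot for the trip.

```python
from dataclasses import dataclass

# Hypothetical stages: the FAQ only says the warnings are visual and audio and escalate.
WARNING_STAGES = ("visual", "visual+audio", "loud audio")
# Hypothetical limit: the FAQ says "repeatedly" without giving a number.
MAX_IGNORED_SEQUENCES = 3


@dataclass
class AssistSession:
    stage: int = 0            # index of the next warning to issue
    ignored: int = 0          # warning sequences fully ignored this trip
    locked_out: bool = False  # once True, assist stays off for the rest of the trip

    def tick(self, hands_detected: bool) -> str:
        """Advance one monitoring interval and return the action taken."""
        if self.locked_out:
            return "locked out"
        if hands_detected:
            # Driver responded, so the escalation starts over.
            self.stage = 0
            return "ok"
        if self.stage < len(WARNING_STAGES):
            action = "warn: " + WARNING_STAGES[self.stage]
            self.stage += 1
            return action
        # Every stage was issued and the driver still did not respond.
        self.ignored += 1
        self.stage = 0
        if self.ignored >= MAX_IGNORED_SEQUENCES:
            self.locked_out = True
            return "locked out"
        return "warning sequence ignored"
```

In this sketch, a driver who responds at any stage resets the escalation, while one who ignores three full sequences is locked out until the trip ends, even if hands are detected afterward.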

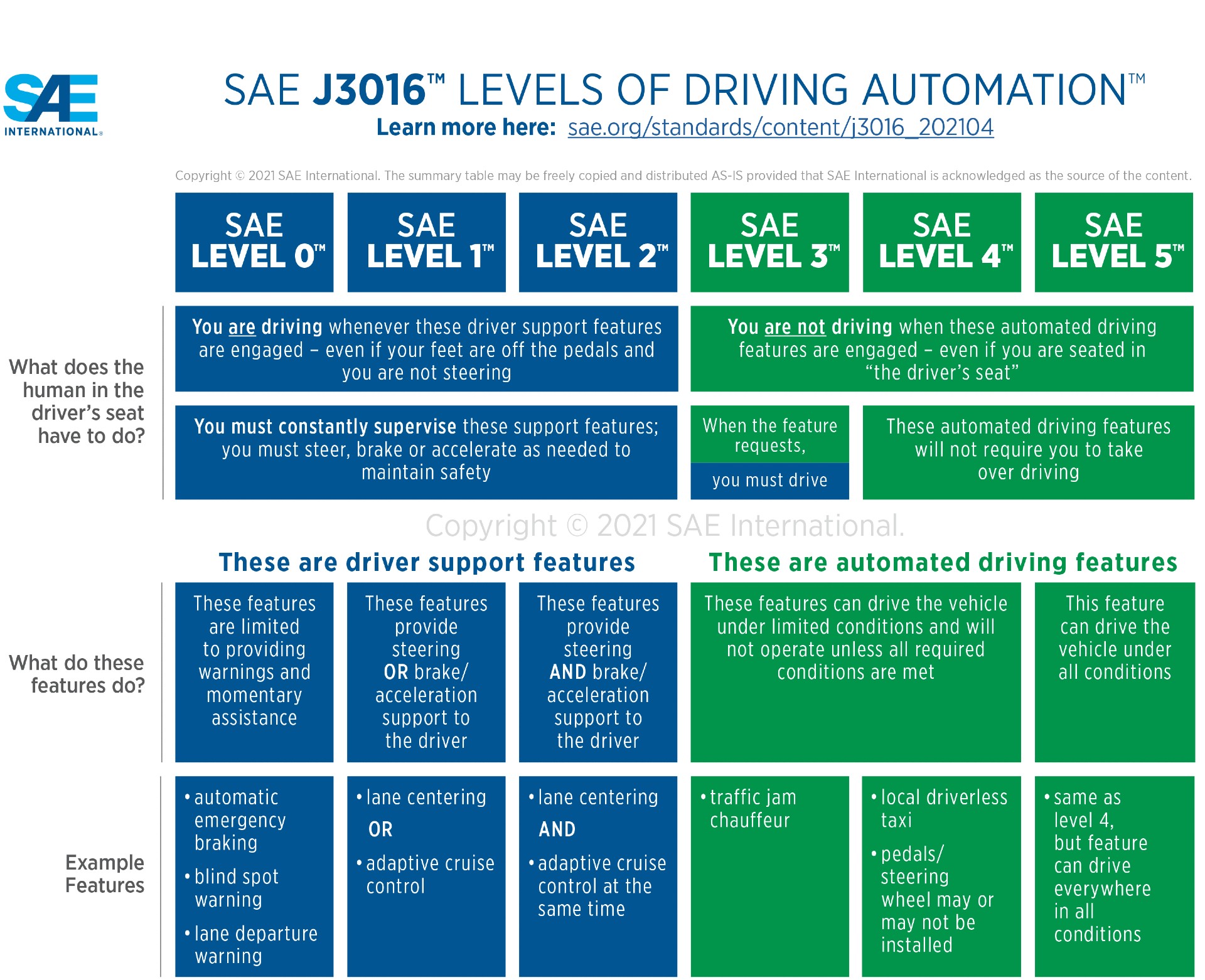

Media labeling the vehicle as “a self-driving Tesla” is a disservice to people and the company. Teslas do not drive themselves; the vehicles are classified as Level 2 under the Society of Automotive Engineers (SAE) Levels of Driving Automation. At Level 2, the driver is still responsible for driving the car when these systems are activated. “You must constantly supervise these support features,” the SAE says. At Levels 3 through 5, the SAE considers the operator not to be driving the car, but Level 5 systems are the only ones that are explicitly labeled as “self-driving.”

“This feature can drive the vehicle under all conditions,” the SAE table states.

Credit: Society of Automotive Engineers
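The SAE distinctions summarized above can be written as a small lookup. This is a minimal sketch that encodes only what this article states about Levels 2 through 5; the function names are hypothetical, and Levels 0 and 1 are left out because they are not discussed here.

```python
def human_is_driving(level: int) -> bool:
    """Return True if the human is still considered the driver.

    Per the SAE summary cited in this article: at Level 2 the human drives
    and must constantly supervise; at Levels 3-5 the human is not driving
    while the feature is engaged.
    """
    if level not in (2, 3, 4, 5):
        raise ValueError("this sketch only covers SAE Levels 2-5")
    return level == 2


def drives_under_all_conditions(level: int) -> bool:
    """Return True only for Level 5, the level the SAE table describes
    as able to drive the vehicle under all conditions."""
    if level not in (2, 3, 4, 5):
        raise ValueError("this sketch only covers SAE Levels 2-5")
    return level == 5
```

Under these definitions, a Level 2 system such as Autopilot returns `True` for `human_is_driving` and `False` for `drives_under_all_conditions`.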

Recent ratings by Consumer Reports showed that Tesla’s biggest flaw was driver monitoring. Many systems use cabin-facing cameras to track eye behavior and ensure the operator keeps their eyes on the road. Teslas use a series of audible and visual cues to alert drivers to their inattentiveness, and steering wheel sensors check that the driver keeps their hands on the wheel.

However, various cheat devices have been marketed across the internet, and in this instance, the driver appears to have had their hands on the wheel while dozing.

Tesla activated camera-based driver monitoring in May 2021. “The cabin camera above your rearview mirror can now detect and alert driver inattentiveness while Autopilot is engaged,” Tesla said in the release notes. Tests of Tesla’s driver monitoring showed the system was effective in some instances, especially when the driver was looking at a cell phone, with alerts coming in 15 seconds.

Irresponsible use poses major risks to those on the road and to the companies that develop these driver assistance programs. While workarounds exist in the form of the previously mentioned cheat devices, people have to know their irresponsibility could cost them, or others, their lives.

I’d love to hear from you! If you have any comments, concerns, or questions, please email me at joey@teslarati.com. You can also reach me on Twitter @KlenderJoey, or if you have news tips, you can email us at tips@teslarati.com.